I've not posted many entries in the past year-- practically none at all in fact. There's something entirely different about coding and writing prose that makes it difficult to shift between one and the other. Nor have I been particularly good (or even acceptably bad) about responding to questions and replies, especially those asking where Daala is, or where Daala is going in the era of AOM.

In the meantime, though, Jean-Marc has written another demo-style post that revisits many of the techniques we've tried out in Daala while applying the benefit of hindsight. I've got a number of my own comments I want to make about what he's written, but for now it stands on its own as an excellent technical summary.

I assume folks who follow video codecs and digital media have already noticed the brand new Alliance for Open Media jointly announced by Amazon, Cisco, Google, Intel, Microsoft, Mozilla and Netflix. I expect the list of member companies to grow somewhat in the near future.

One thing that's come up several times today: People contacting Xiph to see if we're worried this detracts from the IETF's NETVC codec effort. The way the aomedia.org website reads right now, it might sound as if this is competing development. It's not; it's something quite different and complementary.

Open source codec developers need a place to collaborate on and share patent analysis in a forum protected by attorney-client privilege, something the IETF can't provide. AOMedia is meant to be that forum. I'm sure some development discussion will happen there, probably quite a bit in fact, but pooled IP review is the reason it exists.

It's also probably accurate to view the Alliance for Open Media (the Rebel Alliance?) as part of an industry pushback against the licensing lunacy made obvious by HEVCAdvance. Dan Rayburn at Streaming Media reports a third HEVC licensing pool is about to surface. To date, we've not yet seen licensing terms on more than half of the known HEVC patents out there.

In any case, HEVC is becoming rather expensive, and yet increasingly uncertain licensing-wise. Licensing uncertainty gives responsible companies the tummy troubles. Some of the largest companies in the world are seriously considering starting over rather than bet on the mess...

Is this, at long last, what a tipping point feels like?

Oh, and one more thing--

As of today, just after Microsoft announced its membership in the Alliance for Open Media, they also quietly changed the internal development status of Vorbis, Opus, WebM and VP9 to indicate they intend to ship all of the above in the new Microsoft Edge browser. Cue spooky X-files theme music.

Cisco introduces the Thor video codec

Aug. 11th, 2015 04:29 pm

I want to mention this on its own, because it's a big deal, and so that other news items don't steal its thunder: Cisco has begun blogging about their own FOSS video codec project, also being submitted as an initial input to the IETF NetVC video codec working group effort:

"World, Meet Thor – a Project to Hammer Out a Royalty Free Video Codec"

Heh. Even an appropriate almost-us-like nutty headline for the post. I approve :-)

In a bit, I'll write more about what's been going on in the video codec realm in general. Folks have especially been asking lots of questions about HEVCAdvance licensing recently. But it's not their moment right now. Bask in the glow of more open development goodness; we'll get to talking about the 'other side' in a bit.

Had this happened next week, I'd have thought it was an April Fools' joke.

Out of nowhere, a new patent licensing group just announced it has formed a second, competing patent pool for HEVC that is independent of MPEG LA. And they apparently haven't decided what their pricing will be... maybe they'll have a fee structure ready in a few months.

Video on the Net (and let's be clear-- video's future is the Net) already suffers endless technology licensing problems. And the industry's solution is apparently even more licensing.

In case you've been living in a cave, Google has been trying to establish VP9 as a royalty- and strings-free alternative (new version release candidate just out this week!), and NetVC, our own next-next-generation royalty-free video codec, was just conditionally approved as an IETF working group on Tuesday and we'll be submitting our Daala codec as an input to the standardization process. The biggest practical question surrounding both efforts is 'how can you possibly keep up with the MPEG behemoth'?

Apparently all we have to do is stand back and let the dominant players commit suicide while they dance around Schroedinger's Cash Box.

Codec development is often an exercise in tracking down examples of "that's funny... why is it doing that?" The usual hope is that unexpected behaviors spring from a simple bug, and finding bugs is like finding free performance. Fix the bug, and things usually work better.

Often, though, hunting down the 'bug' is a frustrating exercise in finding that the code is not misbehaving at all; it's functioning exactly as designed. Then the question becomes a thornier issue of determining if the design is broken, and if so, how to fix it. If it's fixable. And the fix is worth it.

A Fabulous Daala Holiday Update

Dec. 23rd, 2014 04:09 pm

Before we get into the update itself, yes, the level of magenta in that banner image got away from me just a bit. Then it was just begging for inappropriate abuse of a font...

Ahem.

Hey everyone! I just posted a Daala update that mostly has to do with still image performance improvements (yes, still image in a video codec. Go read it to find out why!). The update includes metric plots showing our improvement on objective metrics over the past year and relative to other codecs. Since objective metrics are only of limited use, there are also side-by-side interactive image comparisons against JPEG, VP8, VP9, x264 and x265.

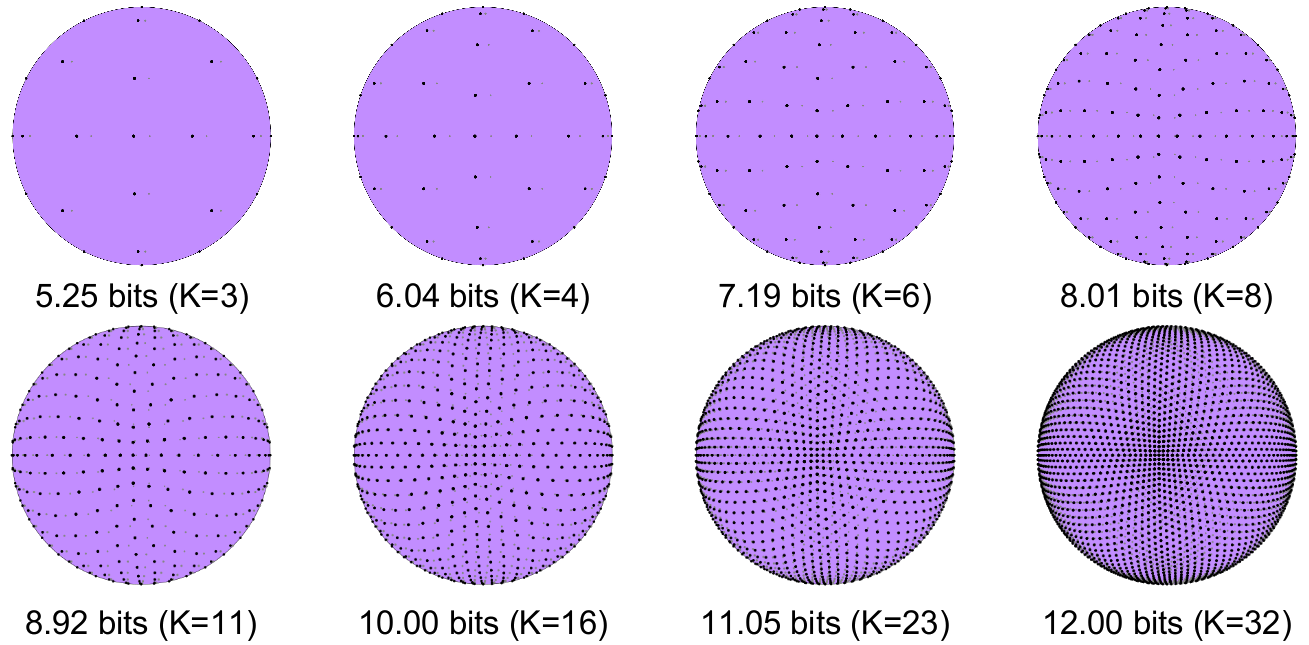

The update text (and demo code) was originally for a July update, as still image work was mostly in the beginning of the year. That update got held up and hadn't been released officially, though it had been discovered by and discussed at forums like doom9. I regenerated the metrics and image runs to use the latest versions of all the codecs involved (only Daala and x265 improved) for this official better-late-than-never progress report!

Jean-Marc has finished the sixth Daala demo page, this one about PVQ, the foundation of our encoding scheme in both Daala and Opus.

(I suppose this also means we've finally settled on what the acronym 'PVQ' stands for: Perceptual Vector Quantization. It's based on, and expanded from, an older technique called Pyramid Vector Quantization, and we'd kept using 'PVQ' for years even though our encoding space was actually spherical. I'd suggested we call it 'Pspherical Vector Quantization' with a silent P so that we could keep the acronym, and that name appears in some of my slide decks. Don't get confused, it's all the same thing!)
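For anyone who hasn't met pyramid vector quantization, here's a minimal sketch of the classic, non-perceptual version. I wrote it for this post; it isn't code from Daala or Opus. The codebook is every integer vector whose absolute values ('pulses') sum to K, and normalizing a codeword puts it on the unit sphere, the 'shape' half of a gain-shape split. The function names and the greedy pulse search are illustrative assumptions. None of Daala's perceptual additions are modeled.

```python
import math


def pvq_quantize(x, k):
    """Map x to an integer vector y with sum(|y_i|) == k (a pyramid codeword).

    Greedy search: project x onto the pyramid, round magnitudes down so we
    never exceed k pulses, then add the remaining pulses one at a time where
    each best improves the normalized correlation with |x|.
    """
    n = len(x)
    ax = [abs(v) for v in x]
    l1 = sum(ax)
    if l1 == 0:
        # Degenerate input: every codeword is equally good; put all pulses first.
        return [k] + [0] * (n - 1)
    y = [int(a * k / l1) for a in ax]  # pulse magnitudes, sum(y) <= k
    while sum(y) < k:
        corr = sum(a * b for a, b in zip(ax, y))
        energy = sum(b * b for b in y)
        best_i, best_score = 0, -1.0
        for i in range(n):
            # Score after adding one pulse at position i: corr^2 / energy.
            c = corr + ax[i]
            e = energy + 2 * y[i] + 1
            if c * c / e > best_score:
                best_i, best_score = i, c * c / e
        y[best_i] += 1
    # Put the signs of x back onto the pulse magnitudes.
    return [m if v >= 0 else -m for m, v in zip(y, x)]


def pvq_shape(y):
    """Normalize a pyramid codeword onto the unit sphere (the 'shape')."""
    norm = math.sqrt(sum(v * v for v in y))
    return [v / norm for v in y]


y = pvq_quantize([0.5, -0.3, 0.2], 4)
print(y, pvq_shape(y))
```

K must be at least 1 for `pvq_shape` to be defined. The larger K is, the finer the codebook's coverage of the sphere.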

Intra paint is not a technique that was part of the original Daala plan, and we're not currently using it in Daala. Jean-Marc envisioned it as a simpler, potentially more effective replacement for intra-prediction. That didn't quite work out-- but it has useful and visually pleasing qualities that, of all things, make it an interesting postprocessing filter, especially for deringing.

Several people have said 'that should be an Instagram filter!' I'm sure Facebook could shake a few million loose for us to make that happen ;-)

The spring 'conference tour' season is finally coming to a close. I'm a bit surprised by the number of people who have asked for my slide decks. Well, slide deck actually, since of course I mostly reused the same slides across the talks this spring.

In any case, I've posted the latest iteration (from my presentation at the just-finished Google VP9 Summit) for anyone who's interested:

https://people.xiph.org/~xiphmont/demo/daala/daala-vp9summit-20140606.pdf

Comments on Cisco, Mozilla, and H.264

Oct. 30th, 2013 08:35 am

Please note: This is not a statement on behalf of Xiph.Org or Mozilla. I speak here for myself, my team, and other developers who share my views on an open web.

If you haven't seen today's announcements from Cisco and Mozilla regarding H.264, you'll want to read them before continuing.

Let's state the obvious with respect to VP8 vs H.264: We lost, and we're admitting defeat. Cisco is providing a path for orderly retreat that leaves supporters of an open web in a strong enough position to face the next battle, so we're taking it.

By endorsing Cisco's plan, there's no getting around the fact that we've caved on our principles. That said, principles can't replace being in a practical position to make a difference in the future. With Cisco making H.264 available at no cost, holding out against H.264 in WebRTC makes even less sense than holding out after Google shipped H.264 in the video tag. At least under these terms, H.264 will be available at no cost to Mozilla and to any other piece of software that uses the downloadable plugin.

Cisco's license hack is obvious enough if you have the money: There's a yearly cap on total payments for any given licensed H.264 product. This year the cap is $6.5M. Any company that pays the cap each year can distribute as many copies as they want. There are still terms and restrictions on how the distribution gets done, but Cisco will be handling that (and only Cisco will be allowed to build and distribute these copies without a separate license).
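The cap is what makes the giveaway possible. Once per-copy fees would exceed it, each additional copy costs nothing. Here's a toy model of that, assuming fees simply accrue per copy until they hit the yearly cap. Only the $6.5M figure comes from this post. The per-copy fee is a made-up placeholder, not the MPEG LA's actual schedule, which has more tiers and conditions.

```python
def annual_royalty(copies, per_copy_fee, annual_cap):
    """Royalty owed for one year: per-copy fees, but never more than the cap."""
    return min(copies * per_copy_fee, annual_cap)


CAP_2011_15 = 6.5e6  # yearly cap cited above, in US dollars
HYPOTHETICAL_FEE = 0.10  # made-up per-copy fee, for illustration only

for copies in (1_000_000, 65_000_000, 500_000_000):
    owed = annual_royalty(copies, HYPOTHETICAL_FEE, CAP_2011_15)
    print(f"{copies:>11,} copies -> ${owed:,.0f}")

# Paying the cap every year for ten years, assuming the cap never changes.
print(f"ten-year total: ${10 * CAP_2011_15:,.0f}")
```

Past cap / fee copies per year, the marginal cost of another copy is zero. That is why a single distributor paying the cap can hand out unlimited copies.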

Once you or your applications download the prebuilt codec blob from Cisco, you're allowed to use that specific blob for anything you want so long as you don't modify it or give it to anyone else. H.264 codecs for everyone! Cisco has committed to these blobs being available for just about every platform and architecture you can think of. "IBM S/360? Yes, please!"

This arrangement has obvious short-term benefits. Open source projects get licensed (if partial and restricted) access to H.264, and users don't feel like they're being held hostage in the ongoing battle between the open web and closed codecs. Firefox and other projects can install H.264 support (via Cisco), which is a big deal.

That said, today's arrangement is at best a stopgap, and it doesn't change much on the ground. How many people don't already have H.264 codecs on their machines, legit or otherwise? Enthusiasts and professionals alike have long paid little attention to licensing. Even most businesses today don't know and don't care if the codecs they use are properly licensed[1]. The entire codec market has been operating under a kind of 'Don't Ask, Don't Tell' policy for the past 15 years and I doubt the MPEG LA minds. It's helped H.264 become ubiquitous, and the LA can still enforce the brass tacks of the license when it's to their competitive advantage (or rather, anti-competitive advantage; they're a legally protected monopoly after all).

The mere presence of a negotiated license divides the Web into camps of differing privilege. Today's agreement is actually a good example; x264 (and every other open source implementation of an encumbered codec) is cut out. They're not included in this agreement, and there's no way they could be. As it is, giving away just this single, officially-blessed H.264 blob is going to cost Cisco $65M over the next decade[2]. Is it any wonder video is struggling to become a first-class feature of the Web? Licensing caused this problem, and more licensing is not a solution.

The giveaway also solves nothing long-term. H.264 is already considered 'on the way out' by MPEG, and today's announcement doesn't address any licensing issues surrounding the next generation of video codecs. We've merely kicked the can down the road and set a dangerous precedent for next time around. And there will be a next time around.

So, we're focusing on being ready.

Fully free and open codecs are in a better position today than before Google opened VP8 in 2010. Last year we completed standardization of Opus, our popular state-of-the-art audio codec (which also happens to be the best audio codec in the world at the moment). Now, Xiph.Org and Mozilla are building Daala, a next-generation solution for video.

Like Opus, Daala is a novel approach to codec design. It aims not to be competitive, but to win outright. Also like Opus, it will carry no royalties and no usage restrictions; anyone will be permitted to use the Daala codec for anything without securing a license, just like the Web itself and every other core technology on the Internet.

That's a real solution that can make everyone happy.

I can't resist a little codec fantasy football.

MPEG HEVC licensing isn't set yet. It will be interesting to watch the negotiations if Cisco's H.264 giveaway plan is wildly successful. In the future, could nearly every legal copy of HEVC come as a binary blob from one Internet source under one cap? I doubt that possibility is something the MPEG LA has considered, and they may consider it now that someone is actually trying to pull it off with H.264. Perhaps in five years, even cameras and televisions will download a software codec to avoid paying monopoly rents. Sillier things have happened given sufficient profit motive.

Or maybe they'll build in a free, legally uncomplicated copy of Daala instead. Dare to dream.

—Monty Montgomery <monty@xiph.org> and others

October 30, 2013

[1] According to the MPEG LA, there are only 1276 H.264 licensees (of all kinds) worldwide.

Source: http://www.mpegla.com/main/programs/AVC/Pages/Licensees.aspx

[2] Cisco's cap liability is $65M over ten years only if the cap amount doesn't change. Historically, the cap has increased every few years, depending on the end-use license. For non-commercial content delivery products, the cap was $3.5M for 2006-7, $4.25M for 2008-9, $5M for 2010 and is currently $6.5M for 2011-15.

Source: http://www.mpegla.com/main/programs/avc/Documents/AVC_TermsSummary.pdf

Xiph and Mozilla's Greg Maxwell (or as Dave has been teasing, 'Professor Max') gave a good, thorough presentation on Opus and the progress being made on Daala at the 2013 GStreamer conference in Edinburgh on Wednesday. Unlike with many of our presentations, this time we were careful to get complete video.

If you've been a fan of the Daala demo updates, his talk touches on some of the topics of upcoming demos, specifically PVQ, the range coder, and motion compensation. Obviously, I'll be going into more detail on those in the actual demo pages.

Another new demo, another new technique specific to Daala: frequency domain prediction of the chroma planes from the luma plane!

Predicting the chroma planes from the luma plane isn't a brand-new idea. Still, we're the only codec actually deploying it, and we're doing it entirely in the frequency domain (which is novel).
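Here's a minimal sketch of the basic idea, built on assumptions of my own rather than on Daala's actual design. A block's chroma AC coefficients are treated as a scaled copy of the co-located luma AC coefficients. The scale factor alpha is then fit by least squares over coefficients the decoder already has (here, one neighboring block), so the decoder could compute the same alpha without it being transmitted. The sketch assumes the luma and chroma coefficient lists are already aligned one-to-one, and it leaves DC out on the assumption that DC is predicted separately.

```python
def fit_alpha(luma_ac, chroma_ac):
    """Least-squares alpha minimizing sum((chroma - alpha * luma) ** 2)."""
    num = sum(l * c for l, c in zip(luma_ac, chroma_ac))
    den = sum(l * l for l in luma_ac)
    return num / den if den else 0.0


def predict_chroma_ac(luma_ac, alpha):
    """Predict chroma AC coefficients as a scaled copy of co-located luma AC."""
    return [alpha * l for l in luma_ac]


# Hypothetical AC coefficients from an already-decoded neighboring block.
neighbor_luma = [2.0, 4.0, -2.0]
neighbor_chroma = [1.0, 2.0, -1.0]
alpha = fit_alpha(neighbor_luma, neighbor_chroma)

# Current block: luma is already coded, so chroma can be predicted from it.
current_luma = [6.0, -4.0, 0.0]
prediction = predict_chroma_ac(current_luma, alpha)
print(alpha, prediction)
```

Working in the frequency domain reduces the prediction itself to a per-coefficient multiply. The encoder then only has to code the difference between the real chroma coefficients and this prediction.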

I've just posted part 3 in my demo series introducing the Daala video codec. This one is kind of a long one, mainly because I think it's one of the only really detailed presentations of a technique Jean-Marc Valin of Xiph invented and first introduced in the Opus audio codec: 'TF' aka Time/Frequency resolution switching.

Even better... while I was documenting TF for posterity, I spotted a possible improvement. So, I've tossed in documentation of a brand new technique as well!
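In Opus, TF resolution switching works by applying Haar transforms to MDCT coefficients. Here's a minimal, single-level sketch of the direction that trades time resolution for frequency resolution. For each frequency bin, the coefficients from two time-adjacent short transforms are replaced by their orthonormal sum and difference. The function names are hypothetical. The real Opus code also handles coefficient ordering, multiple levels and per-band decisions, none of which is modeled here.

```python
import math

INV_SQRT2 = 1.0 / math.sqrt(2.0)


def haar_pair(a, b):
    """Orthonormal 2-point Haar: scaled sum and difference (energy preserving)."""
    return (a + b) * INV_SQRT2, (a - b) * INV_SQRT2


def tf_merge(block0, block1):
    """Combine two time-adjacent short transforms of the same band.

    For each bin k, (block0[k], block1[k]) becomes a Haar (sum, difference)
    pair: fewer time slots, more coefficients per slot.
    """
    out = []
    for a, b in zip(block0, block1):
        out.extend(haar_pair(a, b))
    return out


def tf_split(coeffs):
    """Undo tf_merge; the orthonormal 2-point Haar is its own inverse."""
    block0, block1 = [], []
    for i in range(0, len(coeffs), 2):
        a, b = haar_pair(coeffs[i], coeffs[i + 1])
        block0.append(a)
        block1.append(b)
    return block0, block1


short0 = [1.0, 2.0, 0.5]
short1 = [3.0, -1.0, 0.5]
merged = tf_merge(short0, short1)
print(merged, tf_split(merged))
```

Because the transform is orthonormal and self-inverse, the decoder undoes exactly what the encoder did, and quantization error energy isn't amplified along the way.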

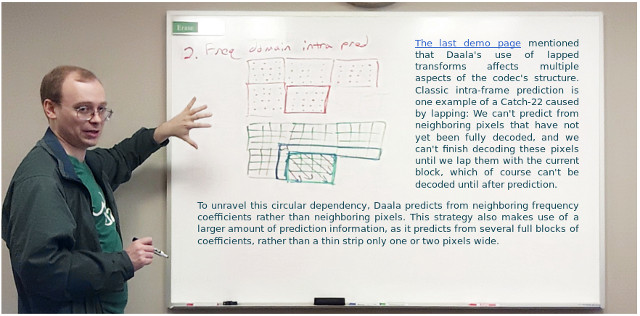

Now up, part two of the introduction I'm writing for Xiph's upcoming video codec Daala. The fact that we're using lapped transforms means we've had to apply a little cleverness to intra prediction, and so we've opted to do it in the frequency domain...

Xiph.Org has been working on Daala, a new video codec, for some time now, though Opus work had overshadowed it until just recently. With Opus finalized and much of the mop-up work well in hand, Daala development has taken center stage.

I've started work on 'demo' pages for Daala, just like I've done demos of other Xiph development projects. Daala aims to be rather different from other video codecs (and it's the first from-scratch design attempt in a while), so the first few demo pages are going to be mostly concerned with what's new and different in Daala.

I've finished the first 'demo' page (about Daala's lapped transforms), so if you're interested in video coding technology, go have a look!
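Daala's own lapped transforms are constructed differently from the one below. Still, the property every lapped transform shares is easy to check with the MDCT used in Vorbis and Opus: basis functions overlap neighboring blocks, yet overlap-adding the outputs reconstructs the input exactly. This is a minimal, deliberately slow O(N²) sketch, not Daala code. It assumes a sine window, an even block size N and a hop size of N.

```python
import math


def sine_window(two_n):
    """Sine window of length 2N; meets the Princen-Bradley condition."""
    return [math.sin(math.pi * (i + 0.5) / two_n) for i in range(two_n)]


def _basis(n, i, k):
    # MDCT basis: cos(pi/N * (i + 1/2 + N/2) * (k + 1/2))
    return math.cos(math.pi / n * (i + 0.5 + n / 2) * (k + 0.5))


def mdct(block, window):
    """2N windowed samples in, N coefficients out."""
    n = len(block) // 2
    xw = [b * w for b, w in zip(block, window)]
    return [sum(xw[i] * _basis(n, i, k) for i in range(2 * n)) for k in range(n)]


def imdct(coeffs, window):
    """N coefficients in, 2N windowed (time-aliased) samples out.

    The 2/N scale makes overlap-add exact with a Princen-Bradley window.
    """
    n = len(coeffs)
    return [2.0 * window[i] / n * sum(coeffs[k] * _basis(n, i, k) for k in range(n))
            for i in range(2 * n)]


def lapped_roundtrip(signal, n):
    """Transform overlapping 2N-sample blocks (hop N) and overlap-add them back."""
    window = sine_window(2 * n)
    pad_end = n + (-len(signal)) % n
    padded = [0.0] * n + list(signal) + [0.0] * pad_end
    out = [0.0] * len(padded)
    for start in range(0, len(padded) - n, n):
        y = imdct(mdct(padded[start:start + 2 * n], window), window)
        for i, v in enumerate(y):
            out[start + i] += v  # time-domain aliasing cancels here
    return out[n:n + len(signal)]


sig = [math.sin(0.3 * i) + 0.05 * i for i in range(10)]
rec = lapped_roundtrip(sig, 4)
print(max(abs(a - b) for a, b in zip(sig, rec)))
```

Each block on its own is aliased and can't be inverted in isolation. The aliasing from one block cancels against its neighbor's during overlap-add, which is the property a lapped-transform codec builds on.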